Modular Object Framework Organizer

MOFO is the base code for a robust C++ program.

It provides a Persistent Store, Version Control, Crash Protection, and Checkpointing.

The goal of the framework is to allow a program to keep running WHILE it's being developed. Versions are tracked, live updates replace running code on the fly, buggy versions are reverted to previous working code, and program data stays in a persistent store which sticks around even if the program crashes and which can be snapshotted (checkpointed) coherently to disk in the background.

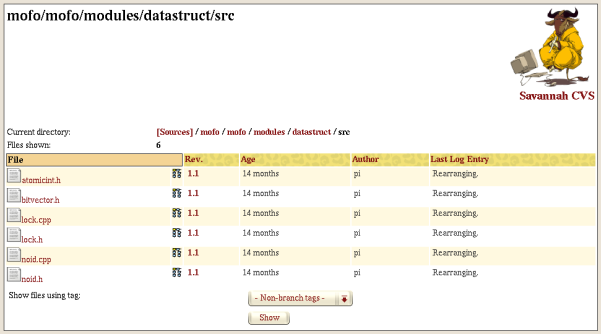

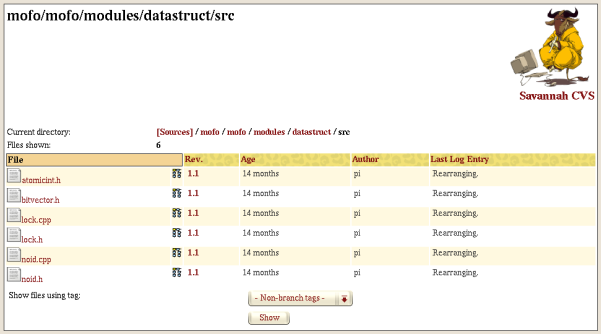

CVS Repository

Note: new version 0.5 to be added soon

How It Works

The MOFO base code loads a single DLL (shared library) which contains the core functionality of the system (DLL manager, signal handler, persistent memory manager, event scheduler, etc.); the DLL manager then loads the libraries containing your application-specific code.
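The module-loading step can be pictured with the POSIX dynamic-loading calls `dlopen`, `dlsym`, and `dlclose`. This is a minimal sketch, not MOFO's DLL manager: the entry-point name `mofo_module_init` and the helpers `load_module` and `swap_module` are hypothetical. As a design choice of the sketch, the replacement is opened before the old library is closed, so a failed load leaves the old code in place; because `dlopen` hands back the existing handle for a library that is already loaded, each version is assumed to live in its own file.

```cpp
#include <dlfcn.h>
#include <cassert>
#include <string>

using ModuleInit = int (*)();  // hypothetical entry-point signature

struct LoadedModule {
    void* handle = nullptr;     // from dlopen(); nullptr if not loaded
    ModuleInit init = nullptr;  // resolved entry point
    std::string error;          // dlerror() text on failure
};

// Open a module library and resolve its (hypothetical) entry point.
LoadedModule load_module(const std::string& path) {
    LoadedModule m;
    m.handle = dlopen(path.c_str(), RTLD_NOW | RTLD_LOCAL);
    if (m.handle == nullptr) {
        const char* err = dlerror();
        m.error = err ? err : "dlopen failed";
        return m;
    }
    dlerror();  // clear any stale error before calling dlsym
    void* sym = dlsym(m.handle, "mofo_module_init");
    if (const char* err = dlerror()) {
        m.error = err;
        dlclose(m.handle);
        m.handle = nullptr;
        return m;
    }
    m.init = reinterpret_cast<ModuleInit>(sym);
    return m;
}

// Replace `current` with the module at `new_path`. The new library is opened
// before the old one is closed, so a failed load leaves the old code running.
bool swap_module(LoadedModule& current, const std::string& new_path) {
    assert(!new_path.empty());
    LoadedModule next = load_module(new_path);
    if (next.handle == nullptr) {
        current.error = next.error;
        return false;
    }
    if (current.handle != nullptr) dlclose(current.handle);
    current = next;
    return true;
}
```

A missing file or a library without the entry point yields an empty handle with the `dlerror()` text in `error`. On older glibc versions the program must be linked with `-ldl`.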

When new code is developed for a module, the main process clones itself, with the clone acting as a watchdog. The main process then closes the module's old DLL and loads the new one, and the program continues running with the new code in place. If the new code causes an unexpected exit, the cloned watchdog process takes over and becomes the new main process: it has all the executable code (including the previous version of the module DLL in question) and is attached to the same persistent shared memory store.
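A watchdog clone maps naturally onto `fork()` and `waitpid()`. This minimal sketch simplifies the roles: the parent stays behind as the watchdog and the forked child runs the new code, whereas in MOFO as described the clone waits and the original process carries on. (A child cannot `waitpid()` on its parent, so a watchdog in the child position needs another signal, such as noticing that `getppid()` has changed.) `run_guarded`, `Outcome`, and `crashing_trial` are hypothetical names.

```cpp
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>
#include <cassert>
#include <cerrno>
#include <cstdlib>

enum class Outcome { Ok, Failed, Crashed };

// Demo trial that dies the way buggy module code might.
[[noreturn]] int crashing_trial() { std::abort(); }

// Run `trial` in a forked copy of this process. The copy shares nothing
// writable with us except shared memory, so a crash there leaves this
// process intact and able to take over.
Outcome run_guarded(int (*trial)()) {
    assert(trial != nullptr);
    pid_t pid = fork();
    if (pid < 0) return Outcome::Failed;      // could not clone at all
    if (pid == 0) _exit(trial());             // child: run the new code
    int status = 0;
    while (waitpid(pid, &status, 0) < 0) {    // parent: wait as the watchdog
        if (errno != EINTR) return Outcome::Failed;
    }
    if (WIFSIGNALED(status)) return Outcome::Crashed;  // e.g. SIGSEGV, SIGABRT
    if (WIFEXITED(status) && WEXITSTATUS(status) == 0) return Outcome::Ok;
    return Outcome::Failed;                   // exited with an error code
}
```

`WIFSIGNALED` separates a real crash (a segfault or `std::abort()`) from an ordinary non-zero exit, which is the distinction a watchdog needs before deciding to take over.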

The following process is used:


Persistent Store:

MOFO allocates a large segment of shared memory. An additional shared memory segment of equal size is also allocated and used for snapshots (the backup). Shared memory remains allocated regardless of whether any processes are using it, unless explicitly destroyed. This means that even if the main program crashes, the data remains in memory instead of disappearing the way it would with a conventional application.

All program data resides here, and multiple processes can share it simultaneously, taking advantage of SMP without using threads. (This tends to simplify debugging.)
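The survival property described here is how System V shared memory behaves: a segment created with `shmget` persists, attached or not, until it is removed with `shmctl(..., IPC_RMID, ...)`. The sketch below assumes the store could be built this way; the source does not say which shared-memory API MOFO uses, and `open_store`, `attach_store`, and `destroy_store` are hypothetical helpers.

```cpp
#include <sys/types.h>
#include <sys/ipc.h>
#include <sys/shm.h>
#include <cassert>
#include <cstddef>

// Create (or open) the segment identified by `key`. It stays allocated until
// destroy_store() is called, whether or not any process has it attached.
int open_store(key_t key, std::size_t size) {
    assert(size > 0);
    return shmget(key, size, IPC_CREAT | 0600);   // -1 on error
}

// Map the segment into this process; returns nullptr on failure.
void* attach_store(int id) {
    void* p = shmat(id, nullptr, 0);
    return p == reinterpret_cast<void*>(-1) ? nullptr : p;
}

// Mark the segment for destruction; the kernel frees it after the last detach.
bool destroy_store(int id) {
    return shmctl(id, IPC_RMID, nullptr) == 0;
}
```

A crashing process is detached just as if it had called `shmdt`, so a new process that attaches the same segment sees the data unchanged. A real store would use a fixed key (for example one derived with `ftok`) so that unrelated processes can find it, and the backup would be a second segment of the same size.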


Version control:

MOFO keeps track of different versions of the core code and loadable modules (DLLs).

This is used in:


Crash Protection:

A Clone process is started before each load of new module code and acts as a watchdog. The main process then loads and runs the new code. If the main program crashes, the Clone marks that module version as unstable and then simply resumes execution starting with the next task in the scheduler, resulting in almost no downtime (assuming no data corruption; otherwise, revert to the last checkpoint).
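Resuming "with the next task" only works if the scheduler's position lives in shared memory rather than in the process that crashed. This sketch assumes a simple linear task list and keeps that position in a `MAP_SHARED` anonymous mapping: a forked worker records each task's index before running it, and when the worker dies the watchdog reads the index, records the task as unstable (standing in for marking its module version unstable), and restarts from the following task. As in the previous fork-based sketch, the roles are simplified; `SharedSchedule`, `run_worker`, `run_all`, and `crashing_task` are hypothetical.

```cpp
#include <sys/mman.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>
#include <cassert>
#include <cerrno>
#include <cstdlib>
#include <vector>

using Task = void (*)();

// Scheduler position, kept in MAP_SHARED memory so it outlives a crashed worker.
// volatile: each store must reach memory before the next task gets to run.
struct SharedSchedule {
    volatile int current;    // index of the task now running
    volatile int completed;  // tasks that returned normally, across all workers
};

// Stand-in for buggy module code.
void crashing_task() { std::abort(); }

// Fork a worker that runs tasks[from..]. Returns -1 when every task finished,
// -2 if fork/wait failed, otherwise the index of the task that killed the worker.
int run_worker(SharedSchedule* s, const std::vector<Task>& tasks, int from) {
    assert(from >= 0);
    pid_t pid = fork();
    if (pid < 0) return -2;
    if (pid == 0) {
        for (int i = from; i < static_cast<int>(tasks.size()); ++i) {
            s->current = i;                  // record progress first
            tasks[i]();
            s->completed = s->completed + 1;
        }
        _exit(0);
    }
    int status = 0;
    while (waitpid(pid, &status, 0) < 0) {
        if (errno != EINTR) return -2;
    }
    if (WIFEXITED(status) && WEXITSTATUS(status) == 0) return -1;
    return s->current;
}

struct RunResult {
    std::vector<int> unstable;  // tasks whose module version would be marked unstable
    int completed = 0;
};

// Watchdog loop: after each crash, record the culprit and resume with the next task.
RunResult run_all(const std::vector<Task>& tasks) {
    RunResult r;
    void* mem = mmap(nullptr, sizeof(SharedSchedule), PROT_READ | PROT_WRITE,
                     MAP_SHARED | MAP_ANONYMOUS, -1, 0);
    if (mem == MAP_FAILED) return r;
    auto* s = static_cast<SharedSchedule*>(mem);
    s->current = 0;
    s->completed = 0;
    int from = 0;
    while (from < static_cast<int>(tasks.size())) {
        int crashed = run_worker(s, tasks, from);
        if (crashed < 0) break;           // all done (-1) or fork/wait failed (-2)
        r.unstable.push_back(crashed);
        from = crashed + 1;               // skip the culprit, keep going
    }
    r.completed = s->completed;
    munmap(mem, sizeof(SharedSchedule));
    return r;
}
```

With tasks `{ok, crash, ok, crash}`, `run_all` reports tasks 1 and 3 as unstable and 2 tasks completed; the second worker starts at task 2 without re-running task 0, because the position survived in shared memory.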

If the Clone needs to revert any of its own code, it starts up the most recent stable version listed in version control. Any new process gains access to all the data by attaching to the persistent memory store, so no data needs to be reloaded.
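Finding "the most recent stable version" takes only a little bookkeeping. This sketch assumes each module has monotonically increasing integer versions and keeps the registry in ordinary heap memory for brevity; in MOFO it would presumably live in the persistent store so that a newly started process can read it. `VersionRegistry` and its methods are hypothetical.

```cpp
#include <cassert>
#include <map>
#include <optional>
#include <string>
#include <vector>

class VersionRegistry {
public:
    // Register a newly built version of a module (versions only increase).
    void add(const std::string& module, int version) {
        auto& list = versions_[module];
        assert(list.empty() || version > list.back().version);
        list.push_back({version, false});
    }

    // Called by the watchdog after the main process crashed in this version.
    void mark_unstable(const std::string& module, int version) {
        for (auto& e : versions_[module])
            if (e.version == version) e.unstable = true;
    }

    // Newest version not marked unstable, or nothing if none is left.
    std::optional<int> latest_stable(const std::string& module) const {
        auto it = versions_.find(module);
        if (it == versions_.end()) return std::nullopt;
        for (auto e = it->second.rbegin(); e != it->second.rend(); ++e)
            if (!e->unstable) return e->version;
        return std::nullopt;
    }

private:
    struct Entry { int version; bool unstable; };
    std::map<std::string, std::vector<Entry>> versions_;
};
```

After versions 1 to 3 of a module are added and version 3 is marked unstable, `latest_stable` returns 2.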


Snapshotting (Checkpointing):

MOFO periodically creates a snapshot of its entire memory space. This is done as a single memory-to-memory copy from the main segment to the backup segment, which is nearly instantaneous (the actual time depends on the amount of memory used and the memory bandwidth of the machine).

The snapshot image is then written to disk by a background process, which saves the complete backup segment as one large file while the main process continues processing and modifying the main shared memory segment.
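The two-stage snapshot (a fast memory copy, then a slow disk write off the critical path) can be sketched with `memcpy` and `fork`. This assumes plain memory buffers, a hypothetical `take_snapshot` helper, and an arbitrary file path; it is not MOFO's implementation. Because the writer is a forked child, it works from a copy-on-write view taken at fork time. If the backup is a shared memory segment, as described above, that isolation does not apply, and the main process must leave the backup alone until the writer exits.

```cpp
#include <sys/types.h>
#include <sys/wait.h>   // the caller reaps the writer with waitpid()
#include <fcntl.h>
#include <unistd.h>
#include <cassert>
#include <cerrno>
#include <cstddef>
#include <cstring>

// Copy the live segment into the backup segment, then fork a child that
// writes the backup to `path` as one flat file. Returns the writer's pid, or
// -1 if fork failed; the caller keeps running and reaps the writer later.
pid_t take_snapshot(const void* main_seg, void* backup_seg, std::size_t len,
                    const char* path) {
    assert(main_seg != nullptr && backup_seg != nullptr);
    std::memcpy(backup_seg, main_seg, len);   // the fast, in-memory part
    pid_t pid = fork();
    if (pid != 0) return pid;                 // parent (or -1): carry on

    // Child: dump the backup as raw bytes, with no per-object serialization.
    int fd = open(path, O_WRONLY | O_CREAT | O_TRUNC, 0600);
    if (fd < 0) _exit(1);
    const char* p = static_cast<const char*>(backup_seg);
    std::size_t left = len;
    while (left > 0) {
        ssize_t n = write(fd, p, left);
        if (n < 0) {
            if (errno == EINTR) continue;
            _exit(1);
        }
        p += n;
        left -= static_cast<std::size_t>(n);
    }
    bool ok = (fsync(fd) == 0);
    ok = (close(fd) == 0) && ok;
    _exit(ok ? 0 : 1);
}
```

Changes the main process makes after `take_snapshot` returns do not appear in the file; it holds the state at the moment of the copy.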

Snapshotting in this way allows:


Completely coherent backups:

An entire cluster can be snapshotted simultaneously. A broadcast message is sent to all nodes, which pause, verify that all nodes are paused, and then all snapshot and unpause. The pause is negligibly short, since the control/sync commands are sent as high-priority messages, preferably on a separate network interface.

Backups can be done quite often; saving to disk is very fast because one large binary block of data is written to disk with no parsing.
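The pause, verify, snapshot, unpause sequence is a two-phase barrier. The sketch below models only the coordinator's bookkeeping, assuming a single coordinator that knows every node id and receives acknowledgements from them; the message transport, the `Phase` names, and the second round of "snapshot done" acknowledgements (useful for knowing that a complete set of images exists) are assumptions rather than details from MOFO.

```cpp
#include <cassert>
#include <set>
#include <utility>

enum class Phase { Idle, Pausing, Snapshotting };

class SnapshotCoordinator {
public:
    explicit SnapshotCoordinator(std::set<int> nodes) : nodes_(std::move(nodes)) {}

    Phase phase() const { return phase_; }

    // Start a round; the caller then broadcasts PAUSE to every node.
    void begin() {
        assert(phase_ == Phase::Idle);  // one round at a time
        acks_.clear();
        phase_ = Phase::Pausing;
    }

    // Record one node's acknowledgement for the current phase. Returns true
    // when the last node has answered:
    //   Pausing      -> every node is paused; broadcast SNAPSHOT (nodes copy
    //                   their memory, then unpause)
    //   Snapshotting -> every node has its snapshot; the round is complete
    bool ack(int node) {
        if (phase_ == Phase::Idle || nodes_.count(node) == 0) return false;
        acks_.insert(node);                  // duplicates collapse in the set
        if (acks_.size() < nodes_.size()) return false;
        acks_.clear();
        phase_ = (phase_ == Phase::Pausing) ? Phase::Snapshotting : Phase::Idle;
        return true;
    }

private:
    std::set<int> nodes_;  // all nodes in the cluster
    std::set<int> acks_;   // nodes that have acknowledged the current phase
    Phase phase_ = Phase::Idle;
};
```

Duplicate and unknown acknowledgements are ignored, so no node is told to snapshot until every node has confirmed that it is paused.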

Coherent backups in turn allow:


Cluster-wide coherent restores from snapshots:

If data corruption or loss indicates that a reversion to a prior known "good" snapshot needs to take place, all nodes can reload a previous memory image from disk. This works even after a reboot, because the image is mapped back in at the same virtual addresses as before, so pointers stored inside it remain valid. All nodes then proceed just as they did when that snapshot was originally created, unpausing and continuing. With additional work, any clients connected to MOFO servers can also revert gracefully.
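Restoring at the same virtual addresses is what lets raw pointers inside the image stay valid. On POSIX systems one way to do this is `mmap` with `MAP_FIXED`. This sketch maps the snapshot file directly with `MAP_PRIVATE` rather than copying it into a shared segment, and the caller-supplied `base` address and the `restore_snapshot` helper are hypothetical.

```cpp
#include <sys/types.h>
#include <sys/mman.h>
#include <fcntl.h>
#include <unistd.h>
#include <cassert>
#include <cstddef>

// Map the snapshot at `path` at exactly `base`, the (page-aligned) address
// the store had when the snapshot was taken. MAP_FIXED replaces any mapping
// already in [base, base + len), so the caller must own that range.
// MAP_PRIVATE sends later writes to private copies; the file stays unchanged.
void* restore_snapshot(const char* path, void* base, std::size_t len) {
    assert(base != nullptr && len > 0);
    int fd = open(path, O_RDONLY);
    if (fd < 0) return nullptr;
    void* p = mmap(base, len, PROT_READ | PROT_WRITE,
                   MAP_PRIVATE | MAP_FIXED, fd, 0);
    close(fd);  // the mapping remains valid after the descriptor is closed
    return p == MAP_FAILED ? nullptr : p;
}
```

For example, two nodes that point at each other can be saved, wiped, and restored at the same base address, and the stored pointers work again without any fix-up.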

Snapshots can also be converted to XML. This allows architectures with a different word size or endianness (e.g. a 64-bit CPU) to run a foreign snapshot. The conversion can also uncover invalid pointer references, which allows corrupt data segments to be identified and discarded. Otherwise, repeated segfaults could cause spurious reversion of the module that hit the bad pointer rather than exposing the underlying problem.
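Pointer validation during export can be as simple as checking that every pointer is null or lands exactly on an object inside the segment. This sketch assumes a segment holding an array of a hypothetical `Node` type and uses an invented XML layout; it writes node indices instead of raw addresses, which is what makes the output usable on a machine with a different word size or endianness.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <sstream>
#include <string>
#include <vector>

struct Node {
    std::int64_t value;
    Node* next;   // nullptr or a pointer into the same segment
};

struct ExportResult {
    std::string xml;
    std::vector<std::size_t> bad;   // indices of nodes holding invalid pointers
};

// Valid if null, or inside the segment and aligned to the start of a Node.
bool valid_ptr(const Node* base, std::size_t count, const Node* p) {
    if (p == nullptr) return true;
    auto b = reinterpret_cast<std::uintptr_t>(base);
    auto a = reinterpret_cast<std::uintptr_t>(p);
    if (a < b || a >= b + count * sizeof(Node)) return false;
    return (a - b) % sizeof(Node) == 0;
}

// Emit each node as XML, replacing raw addresses with node indices.
// Nodes with bad pointers are flagged instead of being followed.
ExportResult export_xml(const Node* base, std::size_t count) {
    assert(base != nullptr || count == 0);
    ExportResult r;
    std::ostringstream out;
    out << "<segment>";
    for (std::size_t i = 0; i < count; ++i) {
        const Node& n = base[i];
        out << "<node id=\"" << i << "\" value=\"" << n.value << "\"";
        if (!valid_ptr(base, count, n.next)) {
            r.bad.push_back(i);
            out << " corrupt=\"true\"";
        } else if (n.next != nullptr) {
            out << " next=\"" << (n.next - base) << "\"";
        }
        out << "/>";
    }
    out << "</segment>";
    r.xml = out.str();
    return r;
}
```

A node whose `next` points into the middle of another node is marked `corrupt` and listed in `bad` instead of being dereferenced, so the bad data is identified without a segfault.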

Status

2003-07-03:

Development version 0.5 will start to make its way into CVS soon. All core functionality returns to the initial module; 0.4 was an excursion into needless housekeeping. The 0.5 initialization sequence avoids race conditions with multiple instances running, assuming the kernel IPC code is also free of race conditions.

Additions (already completed) include shared memory data journalling for atomic sets of actions. This prevents data corruption and generally obviates the need for critical sections: operations are written to the journal, then the journal executes all of them. If the journal was not completely written, no actions occur; if the process is killed during journal playback, it replays all actions upon restart. A journalling version of the optimizing tree class is included; journal overhead is about 1.7 microseconds for an add or delete in the slower batch mode (which copies to the main journal to allow nested journal usage, instead of direct mode), measured on a dual Athlon MP 1600+ with PC2100 memory. Journals are unique per process/thread to prevent third-party journal munging.
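The journalling scheme described above behaves like a redo log with a commit flag. This minimal sketch assumes fixed-size journals and a single operation type, "store this word at this offset", chosen because it is idempotent, which is what makes replaying every action after an interrupted playback safe. `Journal`, `journal_add`, `journal_commit`, and `journal_play` are hypothetical and do not model MOFO's batch or direct modes.

```cpp
#include <atomic>
#include <cassert>
#include <cstddef>
#include <cstdint>

constexpr std::size_t kMaxOps = 64;

// One journal entry: store `value` at word `offset` of the store. Setting a
// value (unlike adding to it) gives the same result however often it is
// replayed, which is what makes "replay everything on restart" safe.
struct Op {
    std::size_t offset;
    std::uint64_t value;
};

// One journal per process/thread; in MOFO it would live in the persistent store.
struct Journal {
    std::size_t count = 0;
    bool committed = false;   // set only after every op has been recorded
    Op ops[kMaxOps] = {};
};

// Record an operation; nothing touches the store yet.
bool journal_add(Journal& j, std::size_t offset, std::uint64_t value) {
    assert(!j.committed);
    if (j.count == kMaxOps) return false;
    j.ops[j.count] = Op{offset, value};
    ++j.count;
    return true;
}

// Mark the journal complete. The fence keeps the compiler from moving the
// op stores after the flag store (hardware ordering is not addressed here).
void journal_commit(Journal& j) {
    std::atomic_signal_fence(std::memory_order_seq_cst);
    j.committed = true;
}

// Apply a committed journal, then clear it. Used after every commit and again
// during recovery, where an uncommitted (partially written) journal is discarded.
void journal_play(Journal& j, std::uint64_t* store) {
    if (j.committed) {
        for (std::size_t i = 0; i < j.count; ++i)
            store[j.ops[i].offset] = j.ops[i].value;
    }
    std::atomic_signal_fence(std::memory_order_seq_cst);
    j.committed = false;   // cleared only after every op has been applied
    j.count = 0;
}
```

On restart, recovery would call `journal_play` on each journal before anything else: a committed journal is reapplied in full, and an uncommitted one is dropped.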

2003-06-09:

Working on development version 0.5; .tar.bz2 snapshots not in CVS.

2002-03-12:

The codebase is about 2500 lines (the CVS plot's numbers are twice as high because it counts blank lines).

2002-03-06:

Merging in code from the previous incarnation.

2002-02-26:

Code is in CVS.